Submit to RepoGenesis

Guidelines for contributing your model's results to the RepoGenesis leaderboard.

Evaluating on RepoGenesis

See the main RepoGenesis repository docs for instructions on generating and evaluating predictions on RepoGenesis (Verified, Full, or Verified (Without Docker)).

RepoGenesis evaluation can be run either locally or on cloud compute platforms. In both cases, we recommend using our eval_harness tool.

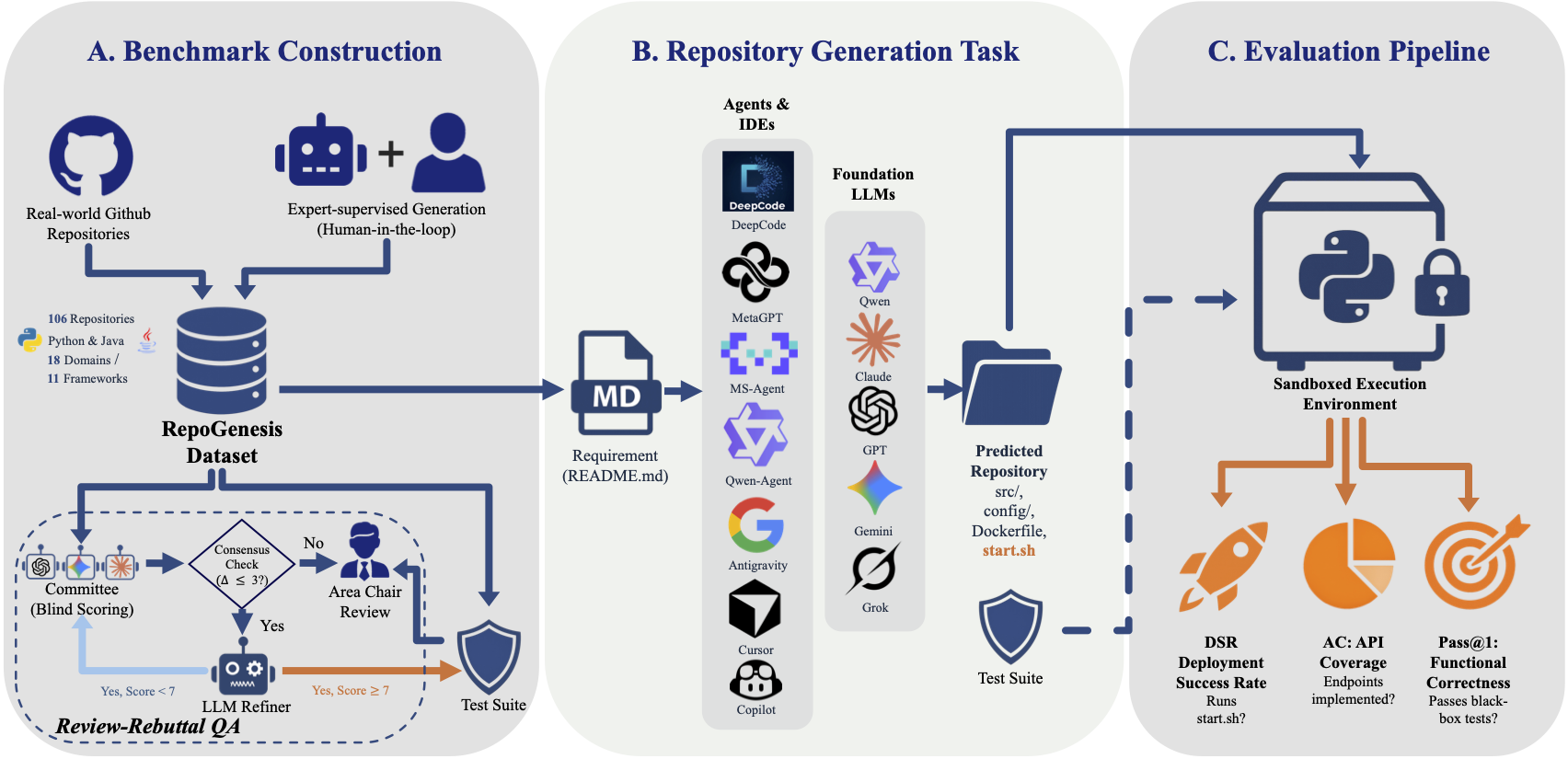

The evaluation harness uses Docker containers to deploy generated microservice repos, run test suites (Pass@1), measure API coverage (AC) via static analysis, and verify deployment success (DSR).
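
To make the three metrics concrete, here is a minimal sketch of how per-repository outcomes might be aggregated into Pass@1, AC, and DSR. The `RepoResult` record, its field names, and the averaging rules are assumptions made for illustration only; they are not the eval_harness schema, and the harness may define each metric differently.

```python
from dataclasses import dataclass


@dataclass
class RepoResult:
    """Hypothetical per-repository record (not the eval_harness schema)."""
    deployed: bool           # service started successfully in its container
    tests_passed: int        # tests that passed on the single generation attempt
    tests_total: int
    endpoints_found: int     # required API endpoints detected by static analysis
    endpoints_required: int


def ratio(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def summarize(results):
    """Average each assumed metric over all benchmark repositories."""
    n = len(results)
    if n == 0:
        return {"pass@1": 0.0, "AC": 0.0, "DSR": 0.0}
    return {
        # Assumption: Pass@1 is the mean per-repo test pass rate.
        "pass@1": sum(ratio(r.tests_passed, r.tests_total) for r in results) / n,
        # Assumption: AC is the mean share of required endpoints implemented.
        "AC": sum(ratio(r.endpoints_found, r.endpoints_required) for r in results) / n,
        # Assumption: DSR is the share of repos that deployed successfully.
        "DSR": sum(1 for r in results if r.deployed) / n,
    }
```

Keeping the three numbers separate is deliberate in this sketch: a repository can deploy cleanly yet cover only part of the required API, so a single blended score would hide where a system falls short.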

Submit to Leaderboard

To submit your system or model to any of our leaderboards, follow the instructions posted at RepoGenesis/experiments.

Submissions should include:

- Generated repository code for all benchmark repos

- Evaluation results JSON from the eval_harness

- A brief description of your system/model and configuration

- Date of generation and model version information
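
Before submitting, it may help to check the items above automatically. The sketch below assumes a hypothetical layout (a `repos/` directory with one folder per benchmark repo, a `results.json` file, and a `metadata.json` file with `description`, `generation_date`, and `model_version` keys). None of these names come from the RepoGenesis instructions; adapt them to whatever RepoGenesis/experiments actually requires.

```python
import json
from pathlib import Path

# Hypothetical metadata keys mirroring the checklist; not an official schema.
REQUIRED_METADATA_KEYS = ("description", "generation_date", "model_version")


def load_json(path):
    """Return parsed JSON from path, or None if the file is missing or invalid."""
    try:
        return json.loads(Path(path).read_text(encoding="utf-8"))
    except (OSError, json.JSONDecodeError):
        return None


def check_submission(root, repo_names):
    """Return a list of problems; an empty list means the checklist passes."""
    root = Path(root)
    problems = []

    # Generated repository code for all benchmark repos.
    for name in repo_names:
        if not (root / "repos" / name).is_dir():
            problems.append(f"missing generated repo: {name}")

    # Evaluation results JSON produced by the harness.
    if load_json(root / "results.json") is None:
        problems.append("missing or invalid results.json")

    # System description, generation date, and model version.
    metadata = load_json(root / "metadata.json")
    if not isinstance(metadata, dict):
        problems.append("missing or invalid metadata.json")
    else:
        for key in REQUIRED_METADATA_KEYS:
            if not metadata.get(key):
                problems.append(f"metadata.json lacks '{key}'")

    return problems
```

For example, `check_submission("my_submission", ["svc_a", "svc_b"])` returns an empty list only when both repo folders and both JSON files are present and the metadata keys are filled in.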
